Text generator ChatGPT is the fastest-growing consumer app ever, and it’s still growing rapidly.

But the dirty secret of AI is that humans are still needed to create, label and structure training data, and training data is very expensive. The dark side is that an exponential feedback loop is forming in which AI becomes a surveillance technology. That makes managing the humans in the AI loop crucial.

Some experts believe that if robots do take over the world, they had better be controlled by decentralized networks, and that humans must be incentivized to prepare the data sets. Blockchain and tokens can help… but can blockchain save humanity from AI?

ChatGPT is just regurgitated data

ChatGPT is a big deal, according to famed AI researcher Ben Goertzel, given that “the ChatGPT thing caused the Google founders to show up at the office for the first time in years!” he laughs. Goertzel is the founder of blockchain-based AI marketplace SingularityNET and an outspoken proponent of artificial general intelligence (AGI), or computers thinking for themselves. As a result, he sees where ChatGPT falls short more clearly than most.

“What’s interesting about ChatGPT and other neuro models is that they achieve a certain amount of generality without having much ability to generalize. They achieve a general scope of ability relative to an individual human by having so much training data.”

In other words, ChatGPT's generality is achieved through the brute force of having so much data. “This is not the way humans achieve breadth, by iterative acts of creative generalization,” he says, adding, “It’s a hack; it’s a beautiful hack; it’s very cool. I think it is a big leap forward.”

He’s not discounting where that hack can take us either. “I won’t be shocked if GPT-7 can do 80% of human jobs,” he says. “That’s big, but it doesn’t mean they can be human-level thinking machines. But they can do a majority of human-level jobs.”

Logic grounded in experience remains harder for AI than scraping the internet. Humans know how to open a bottle cap, for example, but AIs need enormous amounts of data to learn that simple task. Still, good large language models (LLMs) can turn language into formal logic, including paraconsistent logic, a kind of logic that can tolerate contradictions, explains Goertzel.

“If you feed them the whole web, almost anything you ask them is covered somewhere on the web.”

Goertzel notes that this makes part of Magazine’s questioning redundant.

“I’ve been asked the same questions about ChatGPT 10 times in the last three weeks, so we could’ve just asked ChatGPT what I think about ChatGPT. Neuromodels can generate everything I said in the last two months; I don’t even need to be saying it.”

Goertzel is important in AI thinking because he specializes in AGI. He says that he and 90% of his AGI colleagues think LLMs like ChatGPT are partly a distraction from this goal. But he adds that LLMs can also contribute to and accelerate work on all kinds of innovation that could play a role in AGIs. For example, LLMs will speed up coding. LLMs can even help ordinary people with no coding skills build a phone or web app, which means non-tech founders can use LLMs to build tech startups. “AI should democratize the creation of software technology and then, a little bit down the road, hardware technology.”

Goertzel founded SingularityNET as an attempt to use blockchain and open-source technology to distribute access to the tech that controls AGIs to everyone, rather than let it stay in the hands of monopolies. Goertzel notes that ChatGPT and other text apps deploy publicly viewable open-source algorithms. And so, the security infrastructure for their data sets and how users participate in this tech revolution is now at a crucial juncture.

For that matter, so is AI development more widely. In March, OpenAI co-founder Elon Musk and more than 1,000 other tech leaders called for a pause of at least six months on training AI systems more powerful than GPT-4. Their open letter warned of “profound risks to society and humanity” and argued the pause would provide time to implement “shared safety protocols” for AI systems. “If such a pause cannot be enacted quickly, governments should step in and institute a moratorium,” they wrote.

Goertzel is more of an optimist about the tech’s potential to improve our lives rather than destroy them, but he’s been working on this stuff since the 1970s.

Reputation systems needed

Humayun Sheikh was a founding investor in the famed AI research lab DeepMind, where he supported commercialization for early-stage AI and deep neural network technology. He is now the founder and CEO of Fetch.ai, a startup developing an autonomous future with deep tech.

He argues that the intersection between blockchain and AI is economically driven, as the funding required to train AI models is prohibitively expensive except for very large organizations. “The entire premise behind crypto is the democratization of technology and access to finance. Rather than having one monopolized entity have the entire ownership of a major AI model, we envision the ownership to be divided among the people who contributed to its development.”

“One way we can absolutely encourage the people to stay in the loop is to involve them in the development of AI from the start, which is why we believe in decentralizing AI technology. Whether it’s people training AI from the start or having them test and validate AI systems, ensuring regular people can take ownership of the AI model is a strong way to keep humans in the loop. And we want to do this while keeping this democratization grounded in proper incentivization mechanisms.”

One approach to this is via emerging reputation systems and decentralized social networks. For example, SingularityNET spin-off Rejuve is tokenizing and crowdsourcing biodata submissions from individuals. It hopes to use AI to analyze and cross-match this data with animal and insect data to discover which parts of the genome can help us live longer. It’s an AI-driven, Web3-based longevity economy. The thinking is that open science should pay, and data depositors should be rewarded for their contributions.

“The development of AI is dependent on human training. Reputation systems can deliver quality assurance for the data, and decentralized social networks can ensure that a diverse slate of thoughts and views are included in the development process. Acceleration of AI adoption will bring forth the challenge of developing un-opinionated AI tech.”

Blockchain-based AI governance can also help, argues Sheikh, who says it ensures transparency and decentralized decision-making via an indisputable record of the data collected and decisions made that can be seen by everyone. “But blockchain technology is only one piece of the puzzle. Rules and standards, as we see in DAOs, are always going to be needed for trustworthy governance,” he says.

Goertzel notes that “you can’t buy and sell someone else’s reputation,” and tokens have network effects. Blockchain-based reputation systems for AI can help consumers tell the difference between AI fakes and real people, and can also provide the transparency needed to hold AI model builders accountable for their AI constructions. In this view, there needs to be a common standard for the tokenized measurement of reputation, adopted first across the blockchain community and then across the mainstream tech ecosystem.

And in turn, reputation systems can expedite AI innovations. “This is not the path to quick money, but it is part of the path for blockchain to dominate the global economy. There’s a bit of a tragedy of the commons with blockchains in the reputation space. Everyone will benefit from a shared reputation system.”

Blockchains for data set management

Data combined with AI is good for many things, such as diagnosing lung cancer, but governments around the world are very concerned with how to govern data.

The key issue is who owns the data sets. The distinctions between open and closed sources are blurred, and their interactions have become very subtle. AI algorithms are usually open-source, but the parameters of the data sets and the data sets themselves are usually proprietary and closed, including for ChatGPT.

The public doesn’t know what data was used to train GPT-4, so even though the algorithms are public, the model can’t be replicated. Various people have theorized that its training data included content from Google and Twitter. Meanwhile, Google denied training its own AI, Bard, on data and conversations from ChatGPT, further muddying the waters of who owns what and how.

Famed AI VC Kai-Fu Lee often says open-source AI is the greatest human collaboration in history, and AI research papers usually contain their data sets for reproducibility, or for others to copy. But despite Lee’s statements, data, when attached to academic research, is often mislabelled and hard to follow “in the most incomprehensible, difficult and annoying way,” says Goertzel. Even open data sets, such as for academic papers, can be unstructured, mislabelled, unhelpful and generally hard to replicate.

So there is clearly a sweet spot where AI meets blockchain: data pre-processing. There’s an opportunity for crypto firms and DAOs to build the tools and decentralized infrastructure for cleaning up training data sets. Open-source code is one thing, but protecting the data is crucial.

“You need ways to access live AI models, but in the end, someone has to pay for the computer running the process,” notes Goertzel. This could mean making users pay for AI access via a subscription model, he says, but tokenomics are a natural fit. So, why not incentivize good data sets for further research? “Data analysis pipelines” for things like genomics data could be built by crypto firms. LLMs could do this stuff well already, but “most of these pre-processing steps could be done better by decentralized computers,” says Goertzel, “but it’s a lot of work to build it.”

Human-AI collaboration: Oceans of data needing responsible stewards

One practical way to think about AI-human collaboration then is the idea of “computer-aided design” (CAD), says Trent McConaghy, the Canadian founder of Ocean Protocol. Engineers have benefited from AI-powered CAD since the 1980s. “It’s an important framing: It’s humans working in the loop with computers to accomplish goals while leveraging the strengths of both,” he says.

McConaghy started working in AI in the 1990s for the Canadian government and spent 15 years building AI-powered CAD tools for circuit design. He wrote one of the very first serious articles about blockchains for AI in 2016.

CAD gives us a practical framing for AI-human collaboration. But these AI-powered CAD tools still need data.

McConaghy founded Ocean Protocol in 2017 to address the issue. Ocean Protocol is a public utility network to securely share AI data while preserving privacy. “It’s an AI play using blockchain, and it’s about democratizing data for the planet.” Impressively, it’s the sixth-most active crypto project on GitHub.

Blockchain has a lot to say about getting data into the hands of the average person. Like Goertzel, McConaghy believes that distributed computers can make an important contribution to protecting AI from unsavory uses. IPFS, Filecoin, Ocean Protocol and other decentralized data controllers have led this mission for the past few years.

Data farming at Ocean already incentivizes people to curate data assets that they think will see a high volume of activity for AI development. Examples include Acentrik, an enterprise data marketplace; Algovera, which builds AI assistants for organizations; and Desights, a protocol for decentralized data science competitions. The “problem for AI people is getting more data and the provenance of that data,” McConaghy says.

Blockchain can help AI with the secure sharing of data (the raw training data, the models and their predictions), providing immutability, provenance, custody, censorship resistance and privacy.
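To make “immutability” and “provenance” a little more concrete, here is a minimal Python sketch of a hash-chained provenance log, the basic data structure that blockchains build on. It is an illustration under stated assumptions, not Ocean Protocol’s implementation or any project’s API: the class and field names are hypothetical, and a real blockchain adds distributed consensus on top. Each record commits to the hash of the record before it, so quietly editing an earlier entry breaks verification.

```python
import hashlib
import json
from dataclasses import dataclass

# Hypothetical names throughout: a sketch of the idea, not any project's API.

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(index: int, payload: dict, prev_hash: str) -> str:
    """SHA-256 over a canonical JSON encoding of the record's contents."""
    body = json.dumps(
        {"index": index, "payload": payload, "prev": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Record:
    index: int
    payload: dict    # e.g. who contributed or relabeled which data set
    prev_hash: str   # links this record to the one before it
    hash: str        # commits to index, payload and prev_hash


class ProvenanceLog:
    def __init__(self) -> None:
        self.records: list[Record] = []

    def append(self, payload: dict) -> Record:
        prev = self.records[-1].hash if self.records else GENESIS
        idx = len(self.records)
        rec = Record(idx, payload, prev, _digest(idx, payload, prev))
        self.records.append(rec)
        return rec

    def verify(self) -> bool:
        """Recompute every hash and check every link back to the genesis value."""
        prev = GENESIS
        for i, rec in enumerate(self.records):
            if rec.index != i or rec.prev_hash != prev:
                return False
            if rec.hash != _digest(rec.index, rec.payload, rec.prev_hash):
                return False
            prev = rec.hash
        return True


if __name__ == "__main__":
    log = ProvenanceLog()
    log.append({"dataset": "example-scans-v1", "contributor": "alice", "action": "upload"})
    log.append({"dataset": "example-scans-v1", "contributor": "bob", "action": "relabel"})
    print(log.verify())  # True
    log.records[0].payload["contributor"] = "mallory"  # tamper with history
    print(log.verify())  # False
```

On its own, a chain like this only makes tampering detectable: whoever holds the whole log could recompute every hash from the altered record onward. That is why blockchains replicate the chain across many independent nodes that must agree on it.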

McConaghy sees this as a huge plus for integrating the two. He grew up playing ice hockey and driving tractors and hacking computers in Saskatchewan, but he always remained an “AI nerd by profession.” “AI converts data to value, but humans must decide which data assets might be good.”

Ocean Protocol has taken this even further to build the foundations of an AI data economy. It tokenizes data assets so that people can publish valuable data as NFTs and tokens, hold them in wallets, put them up for sale on data DEXs and even manage them in data DAOs. Tokenizing data unlocks the data economy by leveraging DeFi tooling. But will these efforts go mainstream before AI does?

Decentralized computers please for autonomous robots

AGI is when computers start thinking for themselves and building better versions of their own source code. “Human-level AGI can read its own source code and existing math and computer science and can make copies of itself to experiment with and then build the next level: ASI, artificial superintelligence,” Goertzel explains.

In Goertzel’s mind, it’s a lot better for this technology to be directed by everyone than by a single player like a tech company or country.

“If you deploy an AGI system across millions [of machines] around the world, and someone can’t put a gun to your head and say, ‘Give me the system,’ blockchain solves that problem, right? Blockchain solves that problem better than it solves the problem of money,” Goertzel argues.

Goertzel specifically defines AGI as “software or hardware with a robust capability to generalize beyond its programming and its training; it’s able to create significant creative leaps beyond the info it’s been given.”

“By my estimates, we are now three to eight years from human-level AGI, then a few years to superhuman AGI. We are living in interesting times.”

“In the medium term, in the next three to five to eight years, we will see a breakthrough in strongly data-bound AIs, to a human level, then after that breakthrough, then what happens?”

Many agree that what’s coming next in AI development may be an important use case for blockchain governance. “AGI will cause world leaders to meet. AGI needs to be open-source running on millions of machines scattered across the planet,” says Goertzel. “So, no country can take control of it and no company can take control of it.”

The “crypto angle for AI is a little bit different,” he explains. AI, and later AGI, needs governance mechanisms for decision-making beyond its training data and programming. Reputational integrity for data sets is crucially important. For this reason, he argues that “reputation can’t be fungible for AI data sets.” When an AI goes rogue, who you gonna call?

Decentralized technologies can’t be the full solution

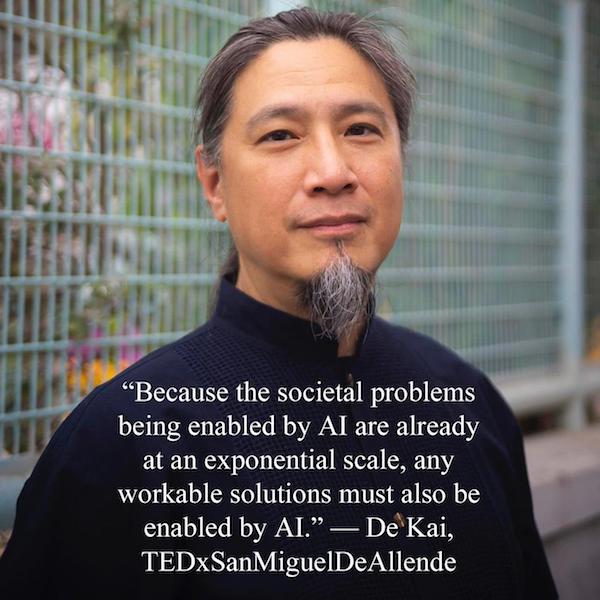

De Kai, professor of computer science and engineering at HKUST and distinguished research scholar at Berkeley’s International Computer Science Institute, agrees the key bottleneck for democratizing AI is the enormous computational resources needed to run AIs. But he is not sure decentralized technologies can be the full solution. “We will never get to the Terminator stage if we don’t tackle the immediate problems now. There are existential problems of AI subconsciously tearing society apart. We need to tackle human biases and the issues of biases of AI.”

He says that decentralized technologies are still highly experimental, while these Web2 problems must be tackled first because they are causing us issues here and now.

“AIs make decisions about things you don’t see every day. Search engines, YouTube, TikTok: they decide the things you don’t see, creating more polarized views and leading to untenable domestic and geopolitical splintering.”

Transparency of the data sets is crucial, says Kai, but if the data set is the entire internet, then that data set is effectively open-source. Google is trained 100% on the internet, and LLMs, which can be trained almost 100% on the internet, will soon supplant search engine algorithms, he argues.

So, Kai disputes the idea that blockchain will solve the problem of unruly AIs.

The “flipside of that [decentralized computing for AI] is the argument that it leads to Skynet Hollywood scenarios, and they can make AI more autonomous by themselves. Decentralization of that computing power is not the solution, as you can unintentionally end up with legions of AIs.”

What is the best solution then? “Decentralization is useful to a point, but it’s not a magic bullet. Web2 has created unintended consequences. We need to learn from that logic and understand blockchain is one foundational tech that offers a lot of advantages but, again, it is not a magic bullet.”

But of course, not all data is freely available on the internet: scientific studies, medical data, personal data harvested by apps and lots of other privately held data can be used to train AI.

One of the most useful tools, he says, is creating large-scale simulations to see how this may all play out. The question, he says, is “deciding what we decentralize and what do we not decentralize.”

Conclusion: Better data pre-processing using blockchains

So, what is the sweet spot for blockchain + AI? “Blockchain being seen and used as a critical piece of mainstream AI development would be that proverbial sweet spot,” says Sheikh.

“Centralizing all the data of an AI model in one location is not optimal for AI development, in our view. Instead, enabling the humans who trained the model to own their own data and be incentivized based on the impact they made on the accuracy of the insights will further accelerate the adoption of AI. AI models from such a platform can be more scalable and sustainable, with improved security and privacy.”

“In the 70s–80s, one of the biggest mistakes was to assume that what we were doing with AI was correct. We have to test our assumptions again now,” worries Kai.